Last week a small, Boston-based firm prepared to release a report about the financial status of nearly 1,000 American colleges and universities. Inside Higher Ed prepped an article on Edmit’s report.

In this post I’ll try to summarize my best understanding of what occurred, then explore what on Earth this might mean for post-secondary education. As always I’m eager to hear your thoughts.

Edmit built an analytical model of campus financial sustainability. According to IHE, this rested on “four primary variables: investment return on endowment funds, tuition prices, tuition discounting and faculty and staff member salaries.” Edmit fed IPEDS data from “946 private colleges” into the model, added qualitative information, then produced a report on each school’s likely financial future.

A key detail of those college reports concerned the chance of each one closing:

The projections used qualitative and quantitative data, from federal sources, to estimate how long before the net expenses for the 946 private colleges exceeded their net assets. After that, the model assumed the colleges would fail, because no enterprise can continue to operate without taking in enough to pay its bills. The model provided that information in a single number so it would be accessible. That number was the estimated time until closing for each college. [emphases added]
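To make that description concrete, here is a minimal sketch in Python of how a single "years until shortfall" number could be computed. This is not Edmit's model, whose details remain unpublished. The function name, the yearly update rule, and the default rates are all assumptions for illustration; only the four inputs (endowment return, tuition, discounting, salaries) come from the IHE description.

```python
from typing import Optional


def years_until_shortfall(
    net_assets: float,
    tuition_revenue: float,
    discount_rate: float,
    salaries: float,
    endowment_return: float = 0.05,  # hypothetical default
    tuition_growth: float = 0.02,    # hypothetical default
    salary_growth: float = 0.03,     # hypothetical default
    horizon: int = 100,
) -> Optional[int]:
    """Return the first year in which net expenses exceed net assets.

    Returns None if that never happens within `horizon` years.
    This is a toy projection, not Edmit's actual method.
    """
    for year in range(1, horizon + 1):
        # Tuition actually collected after discounting.
        net_tuition = tuition_revenue * (1 - discount_rate)
        # Net expenses: the part of salaries that tuition does not cover.
        net_expenses = max(salaries - net_tuition, 0.0)
        if net_expenses > net_assets:
            return year
        # Assets earn a return, then absorb this year's gap or surplus.
        net_assets = net_assets * (1 + endowment_return) + net_tuition - salaries
        # Sticker tuition and salaries drift upward each year.
        tuition_revenue *= 1 + tuition_growth
        salaries *= 1 + salary_growth
    return None
```

Calling it with a college's figures returns either a year count or `None` for "no shortfall within the horizon". Even in this toy form, the result swings heavily on the assumed growth rates, which is one reason critics objected to compressing a forecast into a single number.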

In 2019 Edmit further developed this analytical tool in-house, supplementing it with an open source project:

The company’s co-founders planned to publish the projections on Github, a platform for open-source projects, under its own logo and the Inside Higher Ed banner. The source code was available, and a lengthy explanation was planned, saying the list was an early measure, that its developers were seeking feedback and potential improvements, and that students and parents shouldn’t base college decisions on it.

Inside Higher Ed started researching the Edmit effort and offered an opinion piece to the firm. This journalistic work alerted campuses and academic organizations to the analytical tool’s existence. Some reacted with lawyers and legal challenges to both Edmit and Inside Higher Ed. As a result Edmit called off the release, and IHE published the somewhat disappointed article linked above.

What were the colleges’ arguments against this forecasting tool?

Some questioned the data as inaccurately describing a given campus’ future:

Pete Boyle, a spokesman for NAICU, said via email[:] “How much sense does it make that four short-term data points can define a college’s long term future, and that colleges do not change and adapt to challenges over time?”

There were other criticisms about the project’s formal features:

The report on the methodology lacked specificity, explanation and breadth, Boyle and others said. The supporting data regarding school closures were questionable, the background research on the choice of explanatory variables and method was lacking, and supporting arguments for choosing the variables were absent.

As one university’s counsel reportedly put it:

“It would be reckless for a respected higher education publisher such as Inside Higher Ed to make such predictions based on old, incomplete, and inaccurate data and an admittedly flawed model,” the lawyer [for Herzing University] wrote.

Another college went further than charging recklessness: “Utica [College] threatened to sue if Inside Higher Ed published an article on Edmit’s projections.”

Perhaps the strongest criticism was that the publication of such forecasts could harm some of the colleges being researched. (From the IHE article: “One college president emailed with the subject line ‘IHE Article Puts Students and Colleges at a Greater Risk?’”) For example, a prediction that a campus was likely to close in ten years, say, would seriously depress student applications, faculty and staff applications and morale, and charitable giving… all of which would speed the institution’s decline along. Put another way, the public act of observing a college could alter its status (on Twitter I called this a kind of Heisenberg effect). In this view, Edmit’s research could close campuses.

As Paul Fain speculates,

Others may have felt their colleges were on the brink of collapse and had to fight against unflattering media coverage with every available resource or risk that collapse accelerating.

Or as commentator Karen Gross argues, the “[l]ist would close off admissions substantially and quickly, well in advance of demise…”

Are these arguments correct? To an extent we can’t fully assess them, since the Edmit analytical tool is still in the dark. But working with what we have, we do have to wonder if such a report could have done harm to colleges already teetering on the financial brink. If we arrive at that conclusion, then keeping the analysis from the light of day was the correct action.

On the other hand… to begin with, Edmit and its data advisory group stand by their data collection and analysis.

Ducoff and Manville… tried to avoid false positives, such as by not requiring a cash cushion for colleges in the forecasts. That means the model was too conservative in some cases. For example, Mount Ida was projected to last indefinitely.

They also argued for some proven accuracy, based on recent history:

While the projections might not fully capture the financial health of some colleges, Edmit had evidence that the forecasts could be accurate. That’s because several colleges included in the modeling tool have shut down during the last several years. Almost all of those colleges had precarious finances, according to the projections.

Here are the model’s estimates for how long it would be before those colleges would have been at risk of closing: Southern Vermont College (four years), Green Mountain College (six years), Marylhurst University (six years), Concordia College of Alabama (six years), Marygrove College (seven years), Newbury College (seven years) and Grace University (seven years).

Remember, too, the open source supplement, which would give people the chance to improve both data and model. Or to fork their own.

Further, the Edmit group argues that students should have access to such information, given the important decisions they make about attendance. I might put it another way. If someone attends a college and it collapses during or after their studies, wouldn’t they have preferred to have known the risks ahead of time? Try this question on for size: is it unethical to block access to such reports from students deciding where to enroll?

If your college is so on the brink that this report could bring it down, you should be seriously thinking about responsibly closing your college via merger or teach-out… We think it is horrible when an airline conceals financial problems and leaves its passengers stranded. Concealing financial problems from students is several times worse.

Perhaps we can reconcile these opposing claims of help versus harm, open against discretion. Maybe a public entity, rather than a private one, could take responsibility for data gathering, analysis, and publication? There might be something of a precedent in a new Massachusetts law, which gives that state more authority to suss out campuses’ financial health. It also seems to have provisions for doing so out of the public eye, when necessary. Could state governments pick up the Edmit tool and apply it sub rosa, letting officials quietly contact colleges and universities to either help them survive or wind them down with a minimum of harm? Or a (post-Trump, post-DeVos) Department of Education could conduct such an analysis. Alternatively, this might be a function performed by non-state actors, such as accrediting agencies. They might have more flexibility, especially when it comes to public records laws. (sibyledu, a fine commentator on this blog, has some good thoughts here)

So we have three choices:

1. Continue as things are now;

2. Edmit publishing their model;

3. A public or private agency doing #2.

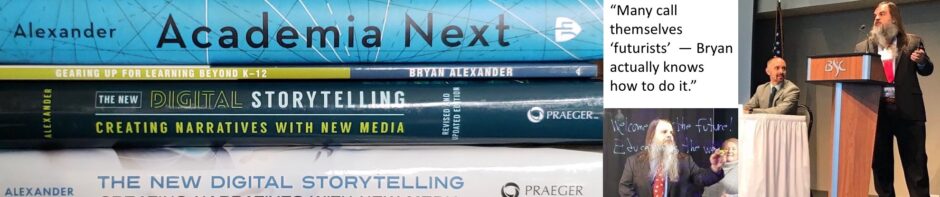

On a personal and professional note, I confess to reading this story with growing alarm. As a futurist, I also gather quantitative and qualitative data about higher ed, and use it to help everyone involved think more effectively about what’s next for colleges and academia. Readers know my forecasts are sometimes dark.

But that work is aimed at the entire higher education sector, or large swathes of it. No single campus has (so far!) accused me of harming its fortunes through my research. This may be due to American academics’ tendency to not think as members of an industry or sector; instead, we usually see ourselves as part of one institution (Tweet College) or a single profession (biology). Academics outside the United States may disagree with my forecasts, but none have charged me with offering harm to their universities. When I consult with individual campuses, they usually keep my work in-house. I’ve had to sign NDAs for several.

And yet this might be too rosy a view. One passage from the IHE article struck me:

“To look 10 years down the road in higher education is dangerous (what will happen with HEA, for example?),” [Pete Boyle, a spokesman for NAICU] said, referring to the long-delayed reauthorization of the federal Higher Education Act. “And to look 50 or 100 years down the road is worse.”

Dangerous. Quite a word to use here. He’s not saying such forecasting is difficult to do, as many would charge (including myself!). Here Boyle isn’t deeming the work unlikely to be reliable, but instead to be actually dangerous.

I don’t know if we’ll see more of this danger charge levied in the near future. It might seem an ill-considered charge, given the demand for more information and transparency about higher ed. Forecasters also might suffer the traditional fate of not being listened to – I don’t mean ignored, but simply unread and unheard.

However, the danger charge might recur, given the increasing fragility of a big chunk of American higher ed. People in leadership or supporting positions at challenged colleges could conclude that bad press is what’s to blame, either honestly or because it’s much easier to dun media than save institutions. Those of us who augur potential decline in the sector could be targeted, accused of making the situation worse. Some might oppose our efforts to increase knowledge and boost conversation, charging that they have the opposite effect. Books like this forthcoming one might take hits as being deleterious – not to the debate, but to higher ed’s health.

We futurists might be wise to be very, very careful.

In the meantime I will insist, despite everything, on supporting conversation. Please add your thoughts to the comment box below.

More to say but one quick reaction was to wonder what Ray Schroeder would make of this…and then if the particular structure of the project made it seem more threatening than what either of you have been doing for some time now.

Greater transparency and distilling financial stability to a single number (in this case # of years of solvency) are great in concept, but building an entire model using just 2017 IPEDS financial data seems terribly flawed to me – one data point does not a trend make. Better to use several years of data and see how things are trending (and how much volatility there is).

As someone who has been a provost, and now a parent, I certainly wanted to know the financial health of the college where my son is now a freshman, and knew the questions to ask. I know the folks at Edmit and have been supportive of their work, but was only made aware of the current project when I read about it in InsideHigherEd yesterday. I have mixed feelings – accreditors are not really doing the job of keeping track of struggling institutions, and having been at a struggling college, I am concerned that students and their parents aren’t getting a true picture of its financial viability. I would like to see some kind of accountability out there, so that trustees and administrators take more responsibility for keeping their institutions healthy.

I’m not sure that I agree with distilling financial viability to one number or grade; there needs to be an ongoing analysis – for example, this Forbes report from two years ago is problematic looking at it today…

https://www.forbes.com/sites/schifrin/2017/08/02/2017-college-financial-grades-how-fit-is-your-school/#71eee4107d68

Lewis and Clark College gets a C, the same grade as Menlo College (where I was provost) and I know that L & C is in much better shape in terms of their endowment and fundraising, partly because they got a new president a few years ago who has been bringing in $20 million a year…

Several opposing forces regarding sharing prognostications about the future of institutions of higher education are described well in this article.

The one that caught my eye the most was:

“Further, the Edmit group argues that students should have access to such information, given the important decisions they make about attendance. I might put it another way. If someone attends a college and it collapses during or after their studies, wouldn’t they have preferred to have known the risks ahead of time? Try this question on for size: is it unethical to block access to such reports from students deciding where to enroll?”

Not to say that information that could alter the course of an institution’s success/continuance should definitely be shouted from the rooftops, but I’m glad to see that the rights of the student were considered in your collection of perspectives.

The question at the heart of the matter seems to be: Will publicizing this analysis:

a.) predict an event (a college closing),

b.) accelerate an inevitable event, or

c.) cause an event that would have been avoidable otherwise? (i.e. the Heisenberg effect)

If it’s c.), then publicizing the analysis is irresponsible.

If it’s a.) or b.) then not publicizing it is unfair to students, donors and taxpayers and therefore irresponsible. Predicting or accelerating the event prevents more damage to those stakeholders.

Complicating that question is that the analysis is made up of at least two parts (the facts and the interpretation or conclusion), so you don’t know which to attribute an event to. Are colleges threatened more by public awareness of the variables in the formula (expenses and assets) or awareness of the conclusion that Edmit drew (closing is inevitable if expenses are greater than assets)?

It seems to me that some of these questions are unanswerable because it’s not possible to run both scenarios.

For example, consider the recent developments at WeWork in the run-up to their planned stock market IPO. There was lots of reporting and analysis that did two things: 1.) pointed out the facts of their finances and 2.) made an interpretation that it was a house of cards that investors would lose money on.

That commentary reached enough critical mass that bringing in more investors became impossible, the IPO never happened, and the value of the company started a downward spiral.

But it’s impossible to say for sure if that happened because of the facts, the interpretation of the facts or the wide publicity. Did the scrutiny of WeWork predict, accelerate or cause a decline in value?

Some in the organization would say that if they had been given more time and the IPO had gone forward, they would have gotten over a threshold and achieved the lift they had always planned on. Critics of WeWork would say that the longer the house of cards stood, the more investors would have gotten burned.

But you can’t answer that for sure, because the scenario has already been run one way and can’t be run another way.

You can do an imperfect comparison with a data set of one by pointing to Uber’s IPO and how public market investors are getting burned because they weren’t exposed to the same kind of scrutiny WeWork got. But the situations are too different to make much of that.

If the answer to the question isn’t knowable, then the issue comes down to responsibility. The more of a platform you have (a publisher like IHE), or the more you are seen as an arbiter of truth (the SEC), the more responsibility you have to be very cautious. I think sitting on the information for now doesn’t mean sitting on it forever, so it makes sense for IHE to pull back the report as long as it’s in the spirit of continuing to consider it.

While I understand the view that “bad” publicity could become a self-fulfilling prophecy, there are at least two examples of colleges that closed and were brought back by alumni and other supporters (Sweet Briar and Antioch College). There have been other cases where news of financial challenges opened the pocketbooks of donors. An alumni group emerged that has tried to purchase Marlboro rather than have it merge. The evidence seems to suggest that announcing future financial challenges given the status quo can lead to changes.

Cynically, I wonder about the degree to which executives are more concerned about the damage to their own careers. It might be harder to get a job somewhere else when it is known that your existing college is not so stable.

At the end of the day hope is not a strategy and denial is not just a river in Africa. We are sadly overbuilt in higher education, and the likely scenario is a reduction in capacity through mergers and closures. It would be better to have an orderly transition rather than the typical sudden announcement that leaves everyone shocked. The challenge is that colleges think the game is a prisoner’s dilemma where, if no one admits to anything, all will be okay, when really it is a game of chicken, and if someone does not make the first move it will be a giant wreck.

Christopher,

You are exactly right. We built way too many colleges, and now we’re reaping what we’ve sown. People were raising the excess capacity issue more than a century ago. We desperately need to get out ahead of the issue. There should be a government-appointed task force to start the process of merging and closing colleges, and start the orderly transition you speak of.